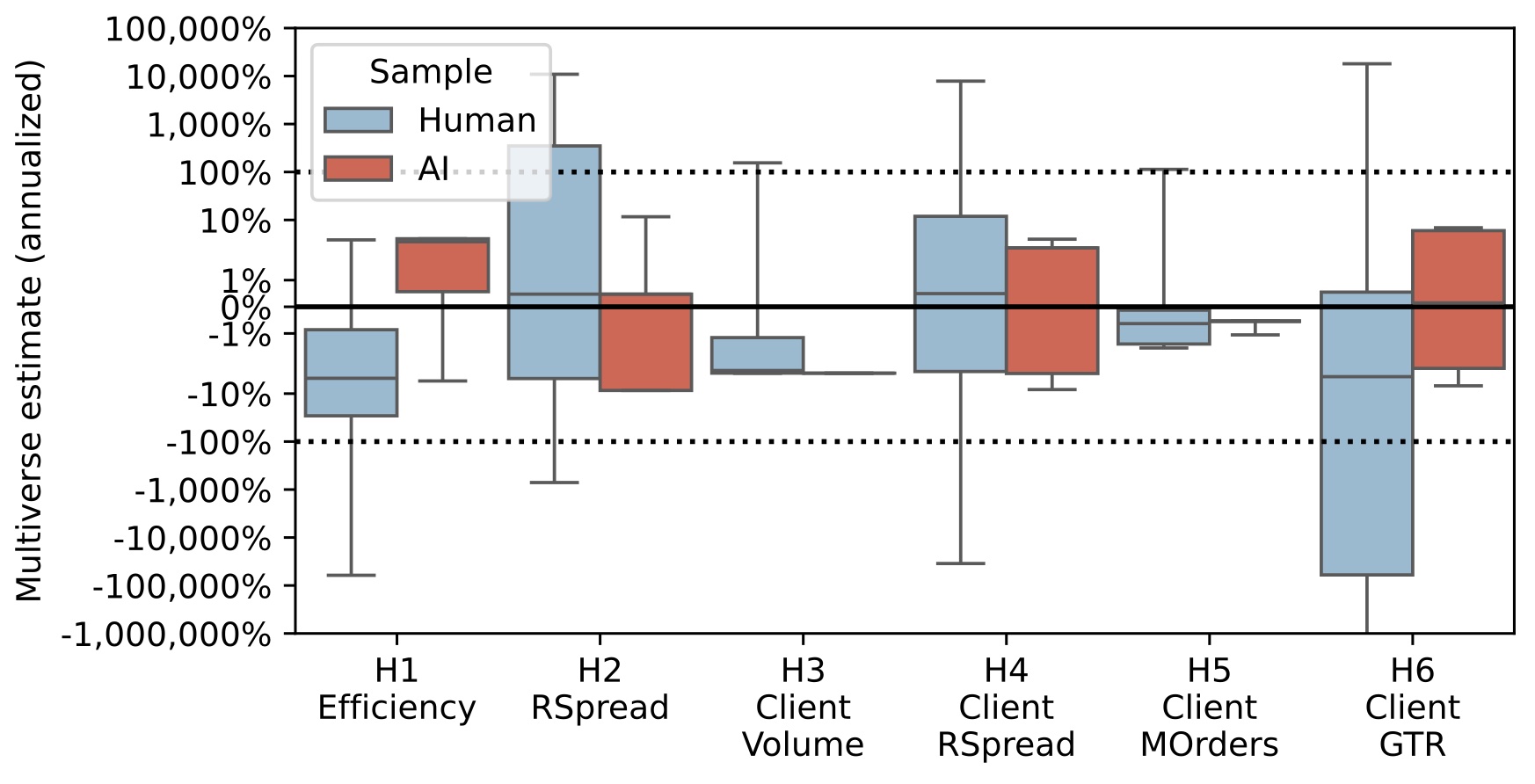

As researchers increasingly use AI for their analyses, one wonders to what extent AI outcomes differ from the human outcomes of the pre-AI era. One can only find out if there is a “gold standard” of human outcomes to compare against. This is where the #fincap experiment is extremely useful: it had 164 human teams independently estimate six annual trends on the same sample, before AI tools were available.

In a new paper with Wenqian Huang and Shihao Yu, we give AIs the same set of instructions as the humans got in #fincap. The two main questions we ask are:

The box plot summarizes, for each of the six trends, the distribution of percentage-annual-change estimates.[1] The blue box plots are based on the 164 estimates of the human teams. The red box plots correspond to 158 AI estimates. Each box stretches from the first to the third quartile, with the horizontal line indicating the median. The whiskers extend to the 2.5% and 97.5% quantiles.
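For readers who want to see how such a box is constructed, here is a minimal Python sketch of the five numbers behind each box: the quartiles, the median, and whiskers at the 2.5% and 97.5% quantiles. The function names `quantile` and `box_summary` and the toy numbers are made up for illustration. The linear-interpolation quantile rule is an assumption too, as the paper may use a different convention.

```python
import math


def quantile(sorted_values, p):
    """Quantile of an already sorted list, by linear interpolation
    between neighbouring order statistics (an assumed convention)."""
    if not sorted_values:
        raise ValueError("need at least one value")
    pos = p * (len(sorted_values) - 1)        # fractional index into the list
    lo = math.floor(pos)
    hi = min(lo + 1, len(sorted_values) - 1)  # stay inside the list
    return sorted_values[lo] + (pos - lo) * (sorted_values[hi] - sorted_values[lo])


def box_summary(estimates):
    """Return the five numbers that define one box in the plot."""
    s = sorted(estimates)
    return {
        "whisker_low": quantile(s, 0.025),   # lower whisker: 2.5% quantile
        "q1": quantile(s, 0.25),             # bottom of the box
        "median": quantile(s, 0.50),         # horizontal line in the box
        "q3": quantile(s, 0.75),             # top of the box
        "whisker_high": quantile(s, 0.975),  # upper whisker: 97.5% quantile
    }


# Made-up numbers for illustration only, not data from the paper.
toy_estimates = [-3.1, -1.2, -0.4, 0.0, 0.3, 0.8, 1.5, 2.2, 4.0]
print(box_summary(toy_estimates))
```

Because the whiskers are quantiles rather than the minimum and maximum, a single extreme estimate moves them only a little in a sample of 164, while the box itself barely changes.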

The plot, along with an analysis of key forks that drive a wedge between the two distributions, led to the following insights:

This final point is visible in the box plot, where the negative tail for humans can extend beyond -100,000%. This does not happen for AIs. One could call this a benevolent type of AI error: yes, it is a source of the wedge between the AI and human distributions, but it undoes a bias.

“You can tell it’s AI. No left tail.”

Please find the paper here.

[1] This is Figure 3 in the paper. It presents estimates after projecting actual analysis paths onto a smaller set of paths constructed by considering only key decision forks. This smaller set is taken from the signature output of #fincap, which is the Nonstandard Errors paper, published in the Journal of Finance. A two-minute summary of the article is here.